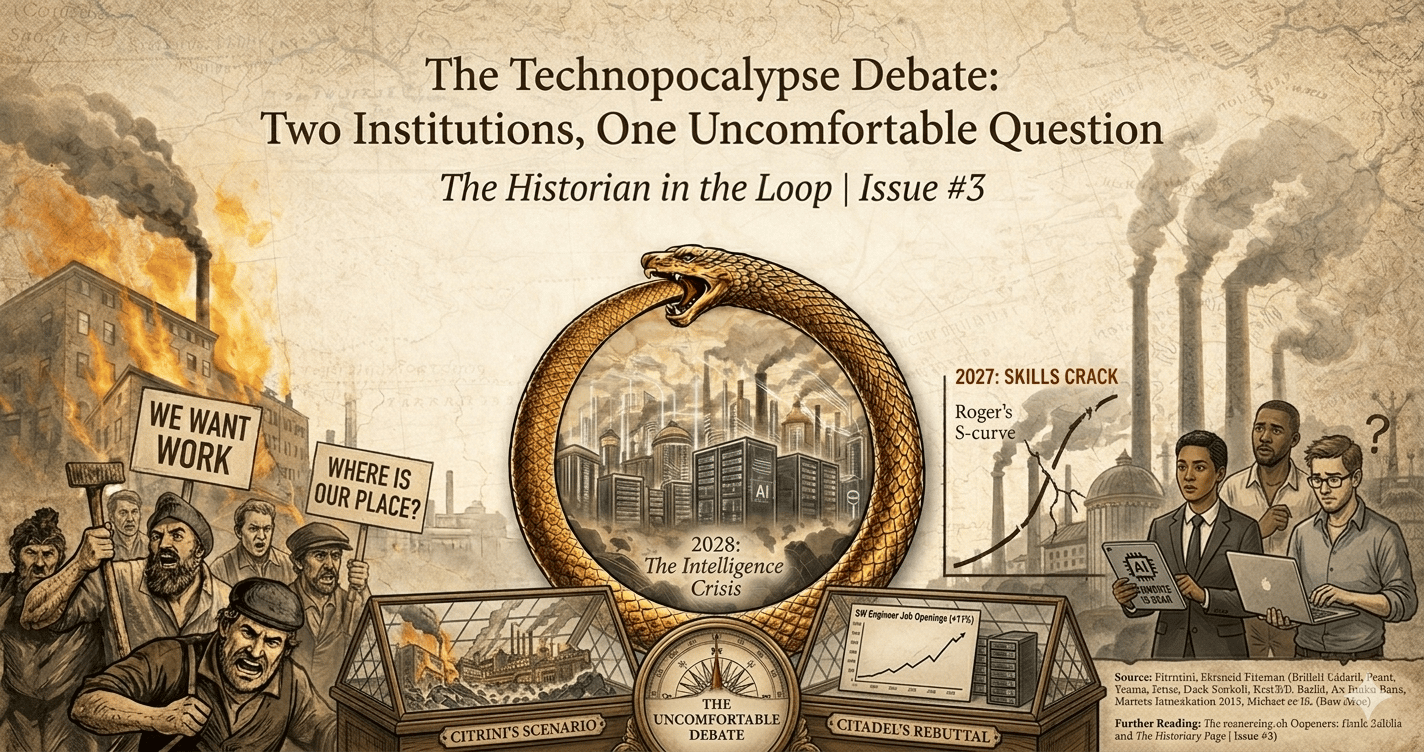

In my last issue, I touched on the thought experiment by Citrini Research. What if AI succeeds too well and destabilizes the very economy it's supposed to improve? What happens when a technological wave this powerful arrives without anything to break it?

This week, Citadel Securities published a direct response: a nuanced, data-driven rebuttal to Citrini's doom scenario.

Two serious institutions. One uncomfortable debate.

So we continue the narrative: what do both articles actually argue, where does each fall short, and what do I actually think about the skills you'll need to survive the technopocalypse?

Because we've been here before.

Citrini's hypothetical scenario is written as a "post-mortem" from mid-2028. It lays out in detail what happens if unchecked AI adoption creates a self-reinforcing "human intelligence displacement spiral" that eventually leads to economic collapse.

The scenario unfolds in three phases. First, the setup (2025–2026): in late 2025, agentic coding tools let people replicate SaaS tools with unprecedented speed, sparking a knife fight in the SaaS industry. Threatened firms adopt AI aggressively, cutting costs and reinvesting the savings into more AI. The reaction resembles the farm mechanization of the 1920s (see issue #2).

Then the spiral accelerates in 2027. Agents are deployed and integrated everywhere, optimizing shopping and eliminating subscriptions. They dismantle intermediation across travel, insurance, and real estate. The labor market starts to crack, because AI also takes over the tasks that displaced workers would normally move into. While the farmers of the 1930s could make cars in Detroit, there is no obvious landing spot for white-collar workers. They flood other markets, leading to overcapacity across many sectors.

In the third phase, the overproduction looks good on paper and nominal GDP grows, but consumers hold on to every penny as income evaporates, creating a "ghost GDP". Mortgage markets falter, financial contagion spreads, and with no policy brake in sight, the crisis spirals into something worse than the Great Depression.

Citadel Securities presents a different picture. It challenges the doom narrative by looking at the most recent data. Job postings for software engineers are actually rising — up about 11% compared to previous years. Data centers are booming. And according to the St. Louis Fed Real Time Population Survey, AI use at work is growing, but the intensity of daily use has remained surprisingly stable. Yes, AI is being adopted and used — but there is little evidence of any imminent mass layoff risk.

Citadel also argues that the AI Ouroboros eating itself doesn't mean the broader economy follows the same trend. In issue #1, I already argued that adoption follows Rogers' S-curve: a slow start, acceleration, then plateau. Citadel adds another point that is, in my opinion, exactly right: AI requires compute, and that capacity isn't fully built out yet. As long as the compute needed for a task costs more than the human labor it would replace, mass displacement won't happen at scale.
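To make those two ideas concrete, here is a minimal Python sketch. It assumes a logistic function as a stand-in for the S-curve and a simple per-task cost comparison for the compute argument. The function names, parameters, and numbers are illustrative assumptions, not figures from Citadel or Rogers.

```python
import math


def adoption_share(t, midpoint=5.0, steepness=1.0, ceiling=1.0):
    """Share of adopters at time t on a logistic S-curve.

    Assumption: a logistic curve approximates the slow start,
    acceleration, and plateau described in the text.
    """
    return ceiling / (1.0 + math.exp(-steepness * (t - midpoint)))


def displacement_is_economic(compute_cost_per_task, labor_cost_per_task):
    """True only when doing the task with AI is cheaper than with a person."""
    return compute_cost_per_task < labor_cost_per_task


if __name__ == "__main__":
    # Hypothetical timeline in years: slow start, fast middle, plateau.
    for year in (0, 2, 5, 8, 10):
        print(year, round(adoption_share(year), 3))

    # Hypothetical costs: compute at 12 per task vs. labor at 10 per task.
    print(displacement_is_economic(12.0, 10.0))
```

With these made-up numbers, adoption sits at exactly half the ceiling at the midpoint, and displacement stays uneconomic until the compute cost per task drops below the labor cost.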

That doesn't mean the market isn't shifting. Citadel argues that when technology makes production cheaper, prices fall, allowing people to buy more with the same money, which circulates back into the economy. Steam power, electrification, computing — all followed this pattern. In 1930, John Maynard Keynes predicted that productivity would make everything so cheap that humans would only need to work 15 hours a week. He was right about the productivity boost. But he underestimated something fundamental: humans always want more. Even in the age of AI, new industries will rise, new wants will emerge, and new jobs will follow.
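The arithmetic behind the falling-prices argument is simple enough to sketch in Python. The function below is a hypothetical illustration of the mechanism, not Citadel's model: it assumes a fixed budget and a single uniform price drop.

```python
def purchasing_power_gain(price_drop):
    """Fractional increase in what a fixed budget buys after prices fall.

    price_drop is a fraction between 0 (no change) and just under 1.
    Assumption: income stays the same and all prices fall by the same share.
    """
    if not 0 <= price_drop < 1:
        raise ValueError("price_drop must be in [0, 1)")
    # Quantity bought scales with 1 / price, so the gain is 1 / (1 - drop) - 1.
    return 1.0 / (1.0 - price_drop) - 1.0


if __name__ == "__main__":
    # Hypothetical example: prices fall by 20%.
    print(f"{purchasing_power_gain(0.20):.0%}")
```

Under those assumptions, a 20% price drop lets the same budget buy about 25% more. Citrini's counterpoint is that the income side of this calculation does not stay fixed.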

While both Citrini and Citadel make interesting points on whether aggregate demand survives, neither asks who specifically loses and who specifically gains. Those aren't the same people. Historically, they never have been. We are too focused on the economic impact of new technologies — what changes for us as humans is, in my opinion, far more essential.

So what do I actually think?

Over the past weeks I've been analysing these trends through various LinkedIn reactions and conversations. Here's my view of what's actually changing, both organizationally and societally.

Traditional front-end and back-end roles become obsolete. What emerges instead are builders, code validators, and specialists in integrating new code into legacy systems. Product managers consolidate with developers, asked to build and manage product vision simultaneously. The developers who thrive are the ones who can talk to clients, deeply understand their technical needs, and help implement solutions. The forward deployed engineer.

On the organizational level, I believe we will see massive change, especially in enterprise. The management layer will be greatly reduced as organizations strive for efficiency. More direct contact within teams, less bureaucracy, less overhead. At the same time, new businesses will rise as entrepreneurs claim market share by being faster, leaner, and more willing to take risks.

Amid the forward deployed engineer hype, a quieter shift is underway that is personally very dear to me: a renaissance for generalists with humanities backgrounds. These backgrounds anchor AI's ethical and human dimensions. These aren't soft-skill add-ons. They're the hard constraints models fundamentally lack: deep contextual understanding, moral intuition, and foresight into unintended consequences. Daniela Amodei, cofounder of Anthropic, advocates for this directly. She argues that communication, EQ, kindness, compassion, and curiosity will become more important in an AI world, not less, because these qualities augment AI rather than compete with it. And the humanities study what is, in my opinion, the most important question of all: what makes us human, how we react, how we thrive, how we communicate.

And this matters beyond the workplace. On the societal level: loneliness is rising. A wave of anti-AI sentiment is coming, not because people hate technology, but because they want to work with other humans. This creates demand for third spaces. More meetups, more online communities, new kinds of collaboration focused on solving immediate local problems. Generalist World is a living example of this already happening.

As I was finishing this issue, Anthropic published their own labor market research. No mass unemployment yet. But hiring of young workers into AI-exposed roles is already slowing. The workers most exposed are older, more educated, and higher paid: exactly the people who built careers assuming their skills were safe.

The Ouroboros isn't eating itself yet. But it's getting hungry.

And as history shows, by the time you can clearly see the spiral, you're already inside it.

We've been here before.

Sources & Further Reading

Citrini Research (2026). "The 2028 Global Intelligence Crisis." citriniresearch.com

Citadel Securities (2026). "The 2026 Global Intelligence Crisis." citadelsecurities.com

Anthropic (2026). "Labor Market Impacts of AI: A New Measure and Early Evidence." anthropic.com/research/labor-market-impacts

Amodei, D., interviewed in Fortune (2026). "Anthropic cofounder says studying the humanities will be 'more important than ever'." fortune.com

Rogers, E. M. (1962). Diffusion of Innovations. (S-curve adoption model)

Keynes, J. M. (1930). "Economic Possibilities for our Grandchildren."